DEPSO [1], also called DEPS, is an algorithm for (constrained) numerical optimization problems (NOP). DEPSO combines the advantages of Particle Swarm Optimization (PSO) and Differential Evolution (DE). It is incorporated into the cooperative group optimization (CGO) system [2].

The DEPSO paper has been cited over 400 times across various applications. DEPSO was also implemented (by Sun Microsystems Inc.) in NLPSolver (Solver for Nonlinear Programming), an extension of Calc in Apache OpenOffice.

Related Information: Please find other related code and software in our Code Library.

License information: DEPSO is free software; you can redistribute it and/or modify it under the Creative Commons Non-Commercial License 3.0.

System Requirements: DEPSO is platform-independent software written in Java; it requires Java version 1.4 or above.

Command line (examples): $ java DEPSO Problem=<Problem_Name> [NAME=VALUE] …

Version V1.0.004: [Github]

- Allows problem instances to be accessed dynamically.

Version V1.0.003: [download].

- The adaptive constraints relaxing (ACR) rule [3] may handle problems with equality constraints more efficiently than the basic constraint-handling (BCH) rule does.

- Newly introduced parameter: isACR.

Version V1.0.002:

- Bug fixed (Reported by Miklos Espak, Nov 16, 2010): ProblemEncoder.java

Version V1.0.001:

- For boundary handling, the cycled version [4] is used instead of the periodic version [5], so that all new solutions are generated within the original search space while the agents search within a virtually infinite space. In addition, the limit on maximal velocity is no longer required.

Version V1.0.000:

- It implements the original DEPSO algorithm [1].

- Setting parameters: Problem, N, T, Tout, FACTOR, CR, c1, c2, weight.

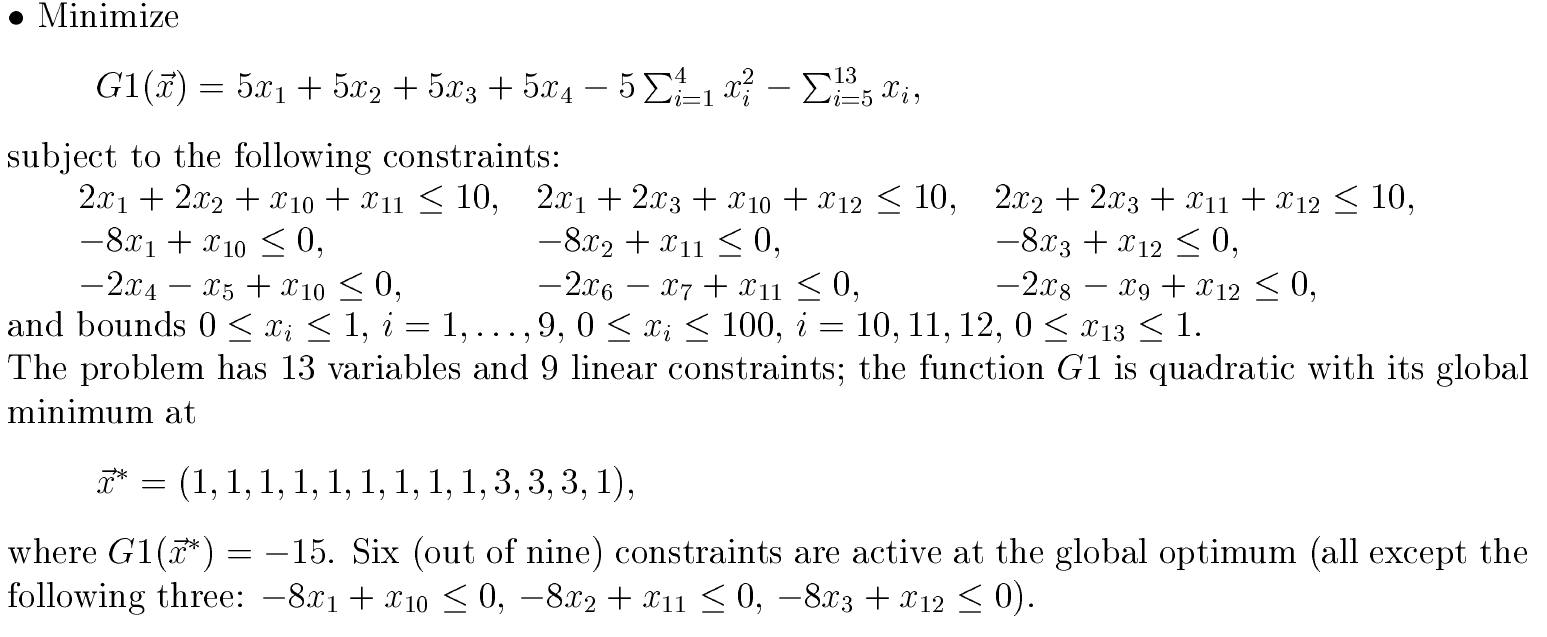

The problem to be solved is a (constrained) numerical optimization problem (NOP), including nonlinear programming problems.

To implement your own problem instance, you need to create a Java source file, normally placed in the directory problem/unconstrained (if the problem has no constraints) or problem/constrained (if the problem has constraints).

Implementation Tips: 1) all variable bounds must be specified; 2) any equality constraint should be relaxed by a small tolerance value (e.g., ε=1E-4 for problem.constrained.Michalewicz_G3); and 3) problem.ProblemEncoder and problem.UnconstrainedProblemEncoder are the parent classes of all constrained (e.g., problem.constrained.Michalewicz_G1) and unconstrained (e.g., problem.unconstrained.GoldsteinPrice) problems, respectively.
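Tips 1 and 2 can be illustrated with a small, self-contained sketch. It uses the G3 benchmark as it is commonly stated in the literature (maximize (√n)^n·∏x_i subject to Σx_i² = 1 with 0 ≤ x_i ≤ 1); the packaged problem.constrained.Michalewicz_G3 class may differ in details. The class name and all method names below are hypothetical and do not reflect the actual ProblemEncoder API.

```java
// Hypothetical sketch: it does NOT extend or call DEPSO's problem.ProblemEncoder.
final class RelaxedG3Sketch {
    // Tolerance used to relax the equality constraint (value taken from tip 2).
    static final double EPSILON = 1e-4;

    // Tip 1: every variable needs explicit bounds; here each x[i] lies in [0, 1].
    static final double LOWER = 0.0;
    static final double UPPER = 1.0;

    /** G3 objective written for minimization: -(sqrt(n))^n * prod(x[i]). */
    static double objective(double[] x) {
        double product = 1.0;
        for (double xi : x) {
            product *= xi;
        }
        return -Math.pow(Math.sqrt(x.length), x.length) * product;
    }

    /** Residual h(x) = sum(x[i]^2) - 1 of the equality constraint h(x) = 0. */
    static double equalityResidual(double[] x) {
        double sum = 0.0;
        for (double xi : x) {
            sum += xi * xi;
        }
        return sum - 1.0;
    }

    /** Tip 2: violation of the relaxed constraint |h(x)| <= EPSILON (0 when satisfied). */
    static double violation(double[] x) {
        return Math.max(0.0, Math.abs(equalityResidual(x)) - EPSILON);
    }

    /** Checks that every variable respects its bounds. */
    static boolean withinBounds(double[] x) {
        for (double xi : x) {
            if (xi < LOWER || xi > UPPER) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        double[] x = {0.5, 0.5, 0.5, 0.5}; // lies exactly on the constraint for n = 4
        System.out.println("objective = " + objective(x));
        System.out.println("violation = " + violation(x));
    }
}
```

Relaxing the equality to a thin band gives the search a feasible region with nonzero volume, which is why a small tolerance is recommended rather than an exact equality test.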

A more detailed description of the problem and implementation can be found here.

Setting parameters [NAME=VALUE]

| NAME | VALUE_type | Range | Default_Value | Description |
|---|---|---|---|---|
| Problem | String | * | <Problem_Name> | The problem to be solved (for example, problem.constrained.Michalewicz_G2, which is the default value) |
| N | integer | >5 | 70 | The number of agents |
| T | integer | >1 | 2000 | The maximum number of learning cycles; the total number of evaluations is about N*T |
| isACR | boolean | | false | Constraint handling: basic constraint-handling (BCH) rule (false) or adaptive constraints relaxing (ACR) rule (true) |
| Tout | integer | >0 | 100 | The output interval (not important); the program outputs runtime information of the best solution every "Tout" cycles |
| FACTOR | real | (0, 1.2] | 0.5 | DE: scale constant |
| CR | real | [0, 1] | 0.9 | DE: crossover constant |
| c1 | real | [0, 2] | 1.494 | PSO: learning factor for pbest |
| c2 | real | [0, 2] | 1.494 | PSO: learning factor for gbest |
| weight | real | [0, 1] | 0.729 | PSO: inertia weight |

//Parameters of the DE operator: there are two suggested settings, 1) FACTOR=0.5, CR=0.9; 2) FACTOR=0.5, CR=0.1

//Parameters of the PSO operator: the default values are c1=c2=1.494, weight=0.729
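To show where the DE and PSO parameters typically enter, here is a minimal sketch of the canonical textbook operators: the inertia-weight PSO velocity update and DE/rand/1 mutation with binomial crossover. This is an illustration under that assumption only; the operators actually used by DEPSO are defined in [1] and may differ. The class and method names are hypothetical.

```java
import java.util.Random;

// Hypothetical sketch of textbook PSO and DE operators, not DEPSO's own code.
final class OperatorSketch {
    // Default values taken from the parameter table.
    static final double C1 = 1.494;
    static final double C2 = 1.494;
    static final double WEIGHT = 0.729;
    static final double FACTOR = 0.5;
    static final double CR = 0.9;

    /** Canonical PSO velocity update for one agent (inertia-weight form). */
    static double[] psoVelocity(double[] v, double[] x, double[] pbest,
                                double[] gbest, Random rng) {
        double[] next = new double[v.length];
        for (int d = 0; d < v.length; d++) {
            next[d] = WEIGHT * v[d]
                    + C1 * rng.nextDouble() * (pbest[d] - x[d])   // pull toward own best
                    + C2 * rng.nextDouble() * (gbest[d] - x[d]);  // pull toward global best
        }
        return next;
    }

    /** Classic DE/rand/1 mutation combined with binomial crossover. */
    static double[] deTrial(double[] target, double[] a, double[] b,
                            double[] c, Random rng) {
        int n = target.length;
        int forced = rng.nextInt(n); // guarantees at least one mutated dimension
        double[] trial = target.clone();
        for (int d = 0; d < n; d++) {
            if (d == forced || rng.nextDouble() < CR) {
                trial[d] = a[d] + FACTOR * (b[d] - c[d]);
            }
        }
        return trial;
    }
}
```

In this form, FACTOR scales the difference vector and CR sets the per-dimension probability of taking the mutated value, which is why the two suggested DE settings differ only in CR.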

[Parsing information]: provides the parsing information for all input parameters.

[Setting information]: shows the information of all setting parameters for the algorithm.

[Runtime information]: The program outputs runtime information, i.e., the evaluation values <Vcon, Vopt> of the best solution, every "Tout" cycles.

//Vopt: the value of the objective function; Vcon: the weighted constraint violation value (≥0), which is not output if Vcon≡0 since there is no violation

[Summary information]: At the end, it outputs the input variables, response values, and evaluation values <Vcon, Vopt> of the best solution.
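One way to read a pair <Vcon, Vopt> is as a lexicographic score: a smaller constraint violation wins first, and the objective value breaks ties. The sketch below assumes that ordering and a minimization objective; both are assumptions made for illustration, and the exact BCH and ACR rules are defined in the cited papers. The class and method names are hypothetical.

```java
// Hypothetical sketch: lexicographic comparison of <Vcon, Vopt> pairs (minimization assumed).
final class EvalComparisonSketch {
    /**
     * Returns true if solution A is at least as good as solution B:
     * lower constraint violation first, then lower objective value.
     */
    static boolean isBetterOrEqual(double vconA, double voptA,
                                   double vconB, double voptB) {
        if (vconA != vconB) {
            return vconA < vconB;
        }
        return voptA <= voptB;
    }

    public static void main(String[] args) {
        // A feasible solution beats an infeasible one even with a worse objective.
        System.out.println(isBetterOrEqual(0.0, 5.0, 0.1, 1.0));
    }
}
```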

[1] Wen-Jun Zhang and Xiao-Feng Xie. DEPSO: Hybrid particle swarm with differential evolution operator. In IEEE International Conference on Systems, Man, and Cybernetics, pages 3816-3821, Washington, DC, USA, 2003. IEEE.

@InProceedings{Zhang:2003p3816,
  Title = {{DEPSO}: Hybrid particle swarm with differential evolution operator},
  Author = {Wen-Jun Zhang and Xiao-Feng Xie},
  Booktitle = {IEEE International Conference on Systems, Man, and Cybernetics},
  Year = {2003},
  PDF={http://www.wiomax.com/team/xie/paper/SMCC03.pdf},
  DOI={10.1109/ICSMC.2003.1244483},
  Code={http://www.wiomax.com/depso},
  Address = {Washington, DC, USA},
  Pages = {3816--3821},
  Publisher = {IEEE}
}

[2] Xiao-Feng Xie, Jiming Liu, and Zun-Jing Wang. A cooperative group optimization system. Soft Computing, 18(3): 469-495, 2014.

@Article{xie2014cooperative,
  Title = {A cooperative group optimization system},
  Author = {Xie, Xiao-Feng and Liu, Jiming and Wang, Zun-Jing},
  Journal = {Soft Computing},
  Year = {2014},
  Number = {3},
  Pages = {469--495},
  Volume = {18},
  PDF={http://www.wiomax.com/team/xie/paper/SOCO14.pdf},
  DOI={10.1007/s00500-013-1069-8},
  Publisher = {Springer}
}

[3] Xiao-Feng Xie, Wen-Jun Zhang, and De-Chun Bi. Handling equality constraints by adaptive relaxing rule for swarm algorithms. In Congress on Evolutionary Computation (CEC), pages 2012-2016, Portland, OR, USA, 2004.

@InProceedings{Xie:2004p2012,
  Title = {Handling equality constraints by adaptive relaxing rule for swarm algorithms},
  Author = {Xiao-Feng Xie and Wen-Jun Zhang and De-Chun Bi},
  Year = {2004},
  Pages = {2012--2016},
  Booktitle = {Congress on Evolutionary Computation (CEC)},
  PDF={http://www.wiomax.com/team/xie/paper/CEC04_ECH.pdf},
  DOI={10.1109/CEC.2004.1331143},
  Address = {Portland, OR, USA}
}

[4] Xiao-Feng Xie and Jiming Liu. A compact multiagent system based on autonomy oriented computing. In IEEE/WIC/ACM International Conference on Intelligent Agent Technology (IAT), pages 38-44, Compiegne, France, 2005. IEEE.

@InProceedings{Xie:2005p1406,
  Title = {A compact multiagent system based on autonomy oriented computing},
  Author = {Xiao-Feng Xie and Jiming Liu},
  Booktitle = {IEEE/WIC/ACM International Conference on Intelligent Agent Technology (IAT)},
  Address = {Compiegne, France},
  Year = {2005},
  PDF={http://www.wiomax.com/team/xie/paper/IAT05.pdf},
  DOI={10.1109/IAT.2005.6},
  Pages = {38--44},
  Publisher = {IEEE}
}

[5] Xiao-Feng Xie and Wen-Jun Zhang. SWAF: Swarm algorithm framework for numerical optimization. In Genetic and Evolutionary Computation Conference (GECCO), pages 238-250, Seattle, WA, USA, 2004. Springer.

@InProceedings{Xie:2004p238,
  Title = {{SWAF}: Swarm algorithm framework for numerical optimization},
  Author = {Xiao-Feng Xie and Wen-Jun Zhang},
  Booktitle = {Genetic and Evolutionary Computation Conference (GECCO)},
  Year = {2004},
  PDF={http://www.wiomax.com/team/xie/paper/GECCO04_SWAF.pdf},
  DOI={10.1007/978-3-540-24854-5_21},
  Address = {Seattle, WA, USA},
  Pages = {238--250},
  Publisher = {Springer}
}

The problem consists of an objective function and a set of constraint functions to be satisfied, with constants defining the constraints. Each function can be nonlinear and non-smooth. If there is no constraint, then the problem becomes an unconstrained optimization problem.
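As a rough illustration of the kind of problem described here, a constrained NOP is often written in the following general form. All symbols are assumptions introduced for this sketch: $\vec{x}$ for the vector of $n$ bounded variables, $f$ for the objective function, $g_j$ for the $j$-th of $m$ constraint functions, and $c_j^{L}$, $c_j^{U}$ for its constant bounds. The two-sided form of the constraints is likewise an assumption, and the original formulation may use different notation.

```latex
\begin{aligned}
\text{minimize}\quad   & f(\vec{x}), \qquad \vec{x} = (x_1, \ldots, x_n) \\
\text{subject to}\quad & c_j^{L} \le g_j(\vec{x}) \le c_j^{U}, \qquad j = 1, \ldots, m
\end{aligned}
```

Under this notation, the unconstrained case corresponds to $m = 0$, and an equality constraint corresponds to $c_j^{L} = c_j^{U}$, which is the case that the tolerance-based relaxation targets.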